A new image protection tool is designed to poison AI programs trained on images used without permission, giving creators a new way to safeguard their work and harm systems they say are stealing it.

Nightshade, a new tool from a University of Chicago team, embeds data in an image’s pixels that damages AI image generators that scour the web for pictures to train on, causing them to malfunction. An AI program might interpret a Nightshade-protected image of a dog, for example, as a cat, or a photo of a car as a cow, according to the team’s research.

A visitor takes a picture with his mobile phone of an image designed with artificial intelligence by Berlin-based digital creator Julian van Dieken inspired by Johannes Vermeer’s painting “Girl with a Pearl Earring” at the Mauritshuis museum in The Hague on March 9, 2023. Julian van Dieken’s work made using artificial intelligence is part of the special installation of fans’ recreations of Johannes Vermeer’s painting “Girl with a Pearl Earring” on display at the Mauritshuis museum. (SIMON WOHLFAHRT/AFP via Getty Images)


“Nightshade’s purpose is not to break [AI] models,” Ben Zhao, the University of Chicago professor heading the Nightshade team, wrote. “It’s to disincentivize unauthorized data training and encourage legit licensed content for training.”

“For models that obey opt-outs and do not scrape, there is minimal or zero impact,” he continued.


Text-to-image AI generators like Midjourney and Stable Diffusion create pictures based on users’ prompts. The programs are trained using text and images from across the internet and other sources.

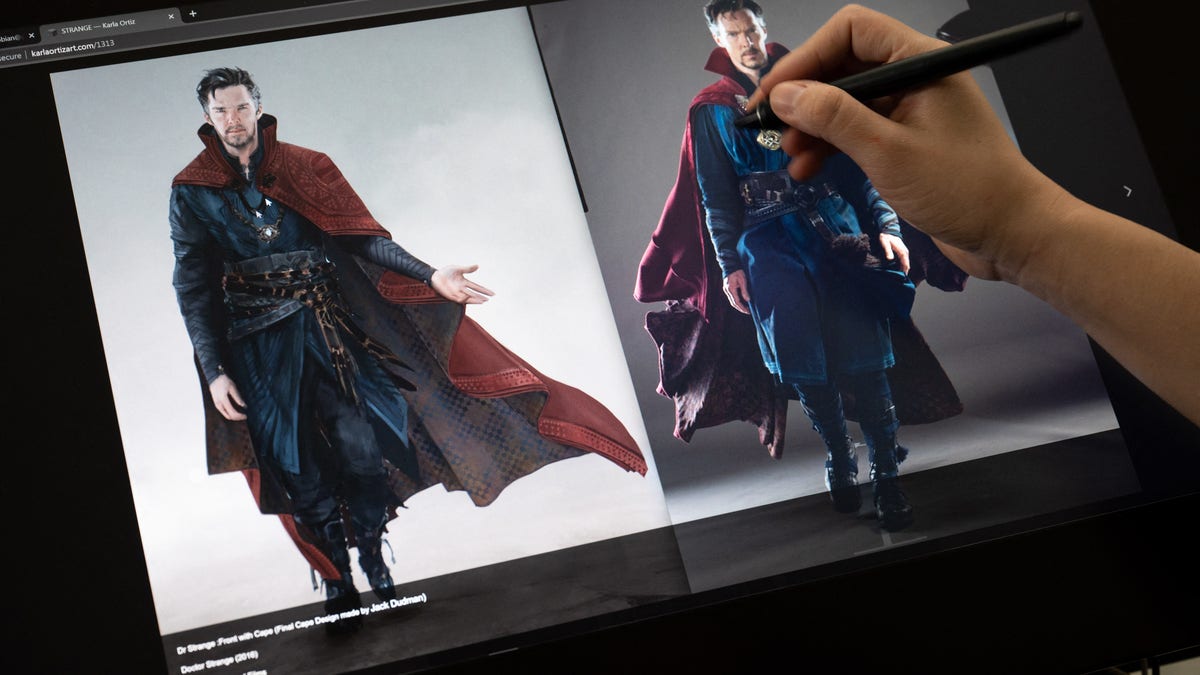

Karla Ortiz, a San Francisco-based fine artist who says her artwork was used to train the tech, filed a lawsuit in January against Midjourney and Stability AI, the company behind Stable Diffusion, for copyright infringement and right of publicity violations. The defendants moved to dismiss the case in April, but the district judge overseeing the case allowed the plaintiffs to submit a new complaint after a July hearing.

“It feels like someone has taken everything that you’ve worked for and allowed someone else to do whatever they want with it for profit,” Ortiz told Fox News in May. “Somebody is able to mimic my work because a company let them.”

An artificial intelligence artist who goes by the name “Rootport” wears gloves to protect his identity and demonstrates how he produces AI art. (RICHARD A. BROOKS/AFP via Getty Images)


Another plaintiff in the lawsuit, Nashville-based artist Kelly McKernan, began noticing last year that imagery resembling their own work was appearing online, apparently created by entering their name into AI image engines.

“At the end of the day, someone’s profiting from my work,” McKernan said. “We’re David against Goliath here.”

In an effort to fight back against AI machines hijacking their artistic styles, McKernan and Ortiz collaborated with Zhao and the University of Chicago team on another art-protecting project called Glaze, a “system designed to protect human artists by disrupting style mimicry,” according to its website.

When Glaze, Nightshade’s predecessor, is applied to an image, it alters how AI machines interpret the picture without changing the way it looks to humans. But unlike Nightshade, Glaze doesn’t cause the model to malfunction.

Illustrator and content artist Karla Ortiz poses in her studio in San Francisco, California. (AMY OSBORNE/AFP via Getty Images)


Still, artists face challenges protecting their works from AI.

“The problem, of course, is that these approaches do nothing for the billions of pieces of content already posted online,” Hany Farid, a professor at the University of California, Berkeley’s School of Information, told Fox News in a statement. “The other limitation with this type of approach is that when it gets hacked — and it will — creators will have posted their content and won’t have protection.”

“That is, it creates a somewhat false sense of protection,” he continued.

No major AI image generators, however, have hacked Nightshade, Zhao said.

Illustrator and content artist Karla Ortiz’s website displays a design and illustration she created for the movie Doctor Strange, in San Francisco, California, on March 8, 2023. Ortiz and two other artists have filed a class-action lawsuit against companies with art-generating services. (AMY OSBORNE/AFP via Getty Images)


“We are not aware of any techniques that can detect it,” he wrote on X. “If/when we find any, we’ll update Nightshade to evade it.”

The University of Chicago team, Stability AI and Midjourney did not return requests for comment.
